The official US military position on autonomous lethal systems is that commanders and operators must be able to exercise “appropriate levels of human judgment over the use of force.” That standard is set out in Department of Defense Directive 3000.09, most recently updated in January 2023. According to multiple defence researchers, legal scholars, and former military officials, it is also almost entirely theoretical when applied to the systems currently deployed.

The gap between the policy and the reality is where autonomous kill decisions are already happening.

What “Appropriate Human Judgment” Actually Means in Practice

The phrase is deliberately vague. It does not mean that a human being reviews and approves each individual engagement. It means that a human authorised the system to operate within a defined set of parameters, after which the system operates within those parameters autonomously.

In practical terms: a human commander authorises a drone to engage any target matching a specific signature within a defined geographic area during a defined time window. The drone then identifies and engages targets matching that signature without further human input. A human set the rules; a machine executes them. Under the DoD’s own framework, this can qualify as appropriate human judgment.

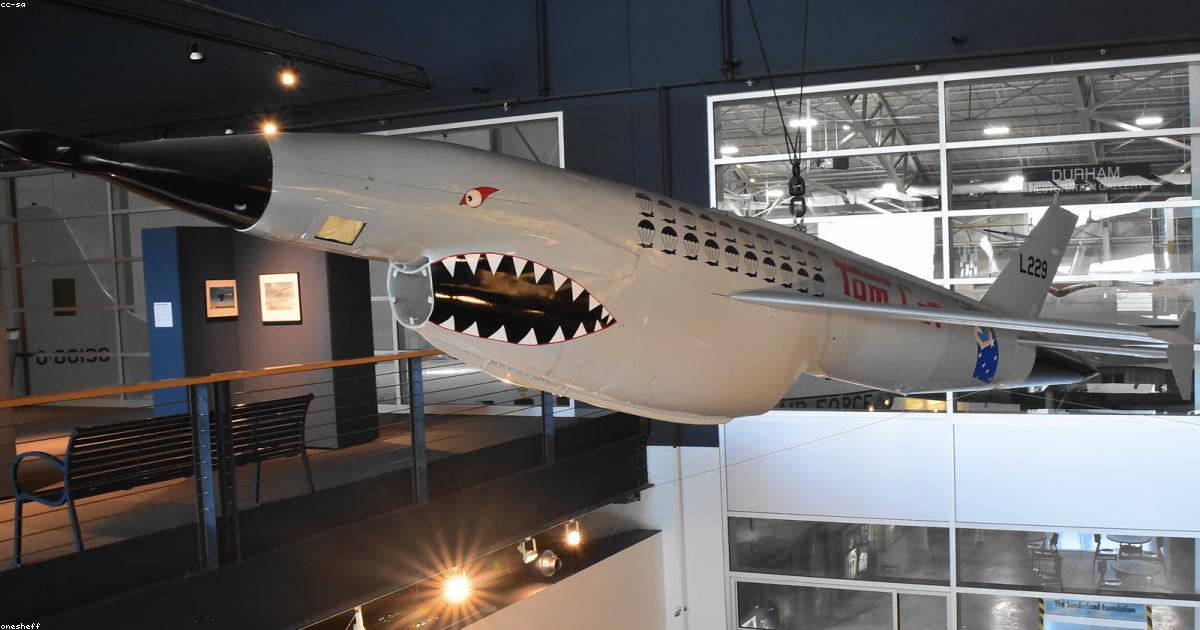

This is not speculation. Loitering munitions built to search a defined area and strike what they find already embody much of this operational concept, including the ALTIUS-600, used by US forces and exported to partner militaries, and the Switchblade drone system, which has been deployed in active conflict zones.

The Targeting Algorithm Problem

The machine learning models that identify targets are trained on data, and the quality and representativeness of that data determine what the system treats as a valid target signature. In counterinsurgency environments, where combatants and civilians often look identical from the air, the margin for error is measured in lives. A 2 percent misclassification rate on a statistical benchmark sounds reassuring, but across 1,000 target identifications it means about 20 errors, and field conditions are rarely as clean as benchmark data. Some of those errors will be innocent people killed.

Project Maven, the Pentagon’s AI targeting programme that prompted an internal revolt at Google when the company’s involvement was revealed in 2018, continues to operate. OpenAI, which lifted its blanket ban on military use in 2024, has since won a Pentagon contract that reportedly extends to warfighting applications. The companies building these systems have financial incentives to oversell their accuracy and understate their limitations.

The International Law Question Nobody Is Asking Loudly Enough

International humanitarian law rests on the premise that the decision to use lethal force is made by someone who can exercise judgement: someone who can assess proportionality, distinguish combatants from civilians, and be held accountable. An algorithm cannot be held accountable. It cannot be prosecuted for a war crime, and it cannot be deterred by the threat of consequences.

This creates a structural accountability gap that legal scholars describe as one of the most serious emerging issues in international law. If an autonomous system kills civilians in violation of the laws of war, who is responsible? The programmer? The commander who authorised deployment? The procurement officer who bought the system? The company that built it?

The answer that powerful militaries prefer is: nobody, because the system was operating within its designed parameters and any failures were statistical rather than intentional. That answer has no place in a framework built on individual accountability for the use of force.

Why This Is Moving Faster Than the Debate About It

The military advantages of autonomous systems are real and significant. They make targeting decisions faster than humans. They do not experience fear, fatigue, or moral hesitation. They can be produced at scale without the training cost of human soldiers. Any military that unilaterally restrains itself from deploying them faces an adversary that will not. This creates a race dynamic that is almost impossible to break without multilateral agreement. UN talks on autonomous weapons have been held under the Convention on Certain Conventional Weapons since 2014, and they have yet to produce a binding agreement.

The debate about whether to allow autonomous lethal AI is largely over. The systems are built and deployed. The debate that matters now is whether any accountability framework can be created retroactively — and whether the governments most invested in these systems have any real interest in creating one.